India's value-for-money IT hardware brand

14 years. 28,000 sq ft. 100,000+ devices. From desktops to data center — designed, engineered, and manufactured in India.

Why choose RDP

Desktops to data center, all Make in India

14 product categories across compute, AI, and data center. Deployment-ready from our 28,000 sq ft facility.

Download Product Catalog

Sovereign AI infrastructure

End-to-end AI compute under one sovereign umbrella. Designed here. Manufactured here. Supported here.

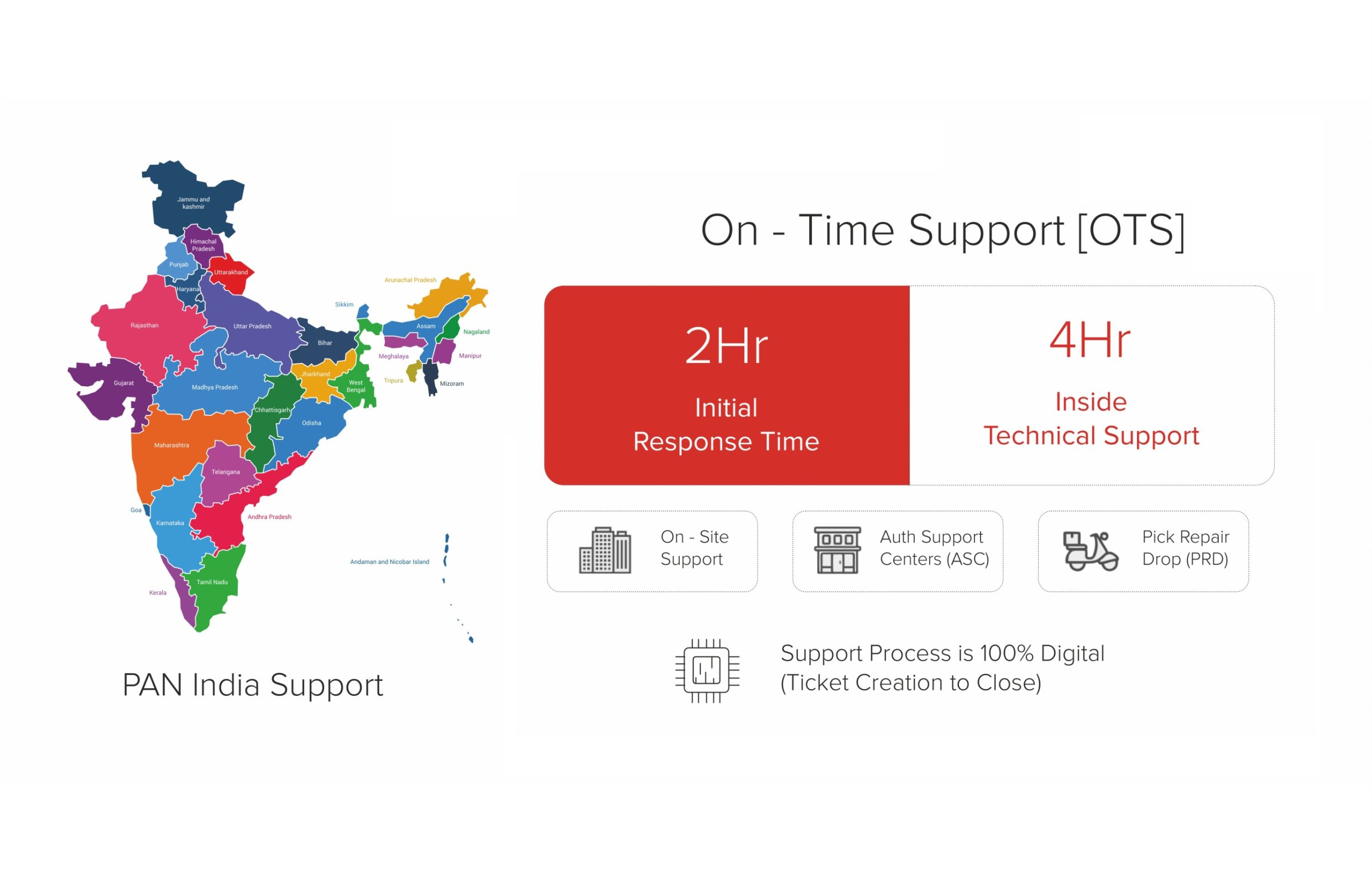

Talk to a Solutions Architect

SLA-driven. Not ticket-driven.

Warranty. SLA. On-site service. Account management. Every commitment documented, every response time defined.

Download SLA Commitment

Built on process, not promises

ISO 9001. PLI 2.0. SOP-led manufacturing. The systems behind every device we ship.

Our Story

AI Single-Node Servers for Inference, GenAI & Team AI Workspaces

Six enterprise-grade single-node servers, purpose-built for private GenAI copilots, production vision inference, and shared AI development sandboxes. Dual-socket CPUs, up to 1 TB of RAM, and GPU-accelerated compute for on-premises AI deployments that start fast and scale later.

On-Premises LLM Infrastructure for Secure Copilots & Document Q&A

Secure single-node servers for internal copilots, RAG over policies and SOPs, and private document Q&A — no data leaves your premises, no per-query API billing.

Production-Grade Servers for Multi-Camera Pipelines & Real-Time Analytics

GPU-accelerated inference servers for production vision AI — multi-camera pipelines, inspection/safety analytics, and stable low-latency deployments at scale.

Shared GPU Infrastructure for Team Experiments & AI Development

Shared GPU sandbox servers for team notebooks, experiments, evaluation pipelines, and controlled internal AI environments — your team's first dedicated AI development platform.

For AI Leaders & IT Infrastructure Heads: Your First On-Premises AI Server

Single-node servers are the fastest path to on-premises AI. Start with one server for your highest-priority use case — private copilot, vision inference, or team sandbox — and scale when ready.

Single-Node Simplicity

No cluster orchestration, no distributed training complexity. One server, one rack unit, one management interface. Deploy production AI services in days, not months.

Data Sovereignty Guaranteed

All AI processing stays on-premises. No data leaves your network — critical for regulated industries, government, healthcare, and IP-sensitive organizations.

Use-Case Optimized Configs

Purpose-built for three distinct workloads: GenAI/RAG copilots (VRAM-optimized), vision inference (throughput-optimized), and dev sandboxes (flexibility-optimized). No over-provisioning.

Scale-Ready Architecture

Start with a single node today. When workloads grow, scale to multi-node clusters or RDP Data Center infrastructure. Your investment carries forward — no forklift upgrades.

On-Premises AI vs Cloud API — Total Cost of Ownership

For organizations running daily AI workloads, on-premises servers deliver predictable costs, zero per-query billing, and complete data control at a fraction of long-term cloud spend.

Zero Per-Query API Billing

A fixed hardware investment replaces variable per-token and per-query cloud API charges. After deployment, query volume is bounded by the server's capacity rather than by usage-based billing, so cost does not rise with each query.

Complete Data Control

Training data, model weights, and inference logs never leave your premises. This reduces compliance risk under DPDPA and industry regulations, and protects IP-sensitive workloads.

Always-On Availability

No cloud region outages, no API rate limits, no vendor throttling. Your AI services run 24/7 with IPMI/iDRAC remote management and 3-year on-site support.

Predictable Budget Planning

One-time CapEx with known support costs vs unpredictable monthly cloud bills. Finance teams can plan AI infrastructure spend accurately across fiscal years.
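
The cost comparison above comes down to a break-even calculation: the month in which cumulative cloud spend overtakes the one-time hardware cost plus ongoing on-premises running costs. The sketch below is a minimal illustration only. The function name `breakeven_months` and every figure in it are hypothetical placeholders, not RDP pricing or measured cloud bills, and it assumes that cloud spend and on-premises running costs (support, power, cooling) are both flat from month to month.

```python
import math


def breakeven_months(capex, monthly_opex, monthly_cloud_spend):
    """Return the first whole month in which cumulative cloud spend
    meets or exceeds cumulative on-premises cost.

    Returns None when the on-premises running cost is not lower than
    the cloud spend, because break-even is then never reached.
    """
    monthly_saving = monthly_cloud_spend - monthly_opex
    if monthly_saving <= 0:
        return None
    return math.ceil(capex / monthly_saving)


# Hypothetical inputs in arbitrary currency units (assumptions, not quotes).
capex = 4_000_000              # one-time server purchase
monthly_opex = 50_000          # support, power, and cooling per month
monthly_cloud_spend = 250_000  # current per-query / per-token API bill

print(breakeven_months(capex, monthly_opex, monthly_cloud_spend))  # 20
```

With these placeholder inputs the server pays for itself in month 20. Substitute your own quoted hardware price, support contract, and recent cloud invoices to get a figure that applies to your workload.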

For SIs, VARs & Resellers: AI Server Channel Economics

AI single-node servers are the highest-ASP (average selling price) category, with significant services-attach potential. Help enterprise customers start their on-premises AI journey with turnkey infrastructure and professional deployment.

Premium Revenue per Deal

- Highest ASP category with premium per-unit margins

- Services attach: deployment, configuration, AI framework setup

- Deal registration for AI infrastructure opportunities

- Expansion revenue as customers scale from single-node to clusters

AI Infrastructure Sales Enablement

- Cloud-to-on-premises ROI calculators and TCO battle cards

- Use-case proposal templates (GenAI/Vision/Sandbox)

- GPU and VRAM sizing guides for common AI workloads

- Partner portal with AI server marketing and BoQ materials
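
As a rough illustration of the kind of arithmetic a VRAM sizing guide involves, the sketch below estimates the GPU memory needed to hold a model's weights at a given numeric precision. It is not taken from RDP's sizing guides. The function name `estimate_vram_gb` is hypothetical, and the `overhead_factor` of 1.2 is an assumed placeholder for KV cache, activations, and runtime buffers, which in practice vary with context length and batch size.

```python
# Bytes needed to store one model parameter at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def estimate_vram_gb(params_billions, precision="fp16", overhead_factor=1.2):
    """Estimate VRAM in GB (10^9 bytes) for serving a model.

    overhead_factor is an assumed multiplier covering everything beyond
    the raw weights; treat it as a starting point, not a measurement.
    """
    weight_bytes = params_billions * 1e9 * BYTES_PER_PARAM[precision]
    return weight_bytes * overhead_factor / 1e9


# Hypothetical example: a 7-billion-parameter model.
for precision in ("fp16", "int8", "int4"):
    print(precision, round(estimate_vram_gb(7, precision), 1))
```

For a 7-billion-parameter model the weights alone take about 14 GB at FP16, so the assumed 20% overhead brings the estimate to roughly 16.8 GB. A real sizing exercise should be validated with a proof-of-concept run on the target workload.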

Technical Support Infrastructure

- Pre-sales workload assessment and GPU sizing support

- PoC program with benchmark suites for customer evaluation

- Post-sales technical backup (3-year on-site warranty)

- AI framework pre-installation and deployment documentation

Win AI Infrastructure Deals with Make in India Credentials

Strengthen partner proposals with RDP's MeitY-recognized manufacturing and PLI 2.0 selection — proven differentiators for enterprise AI server procurement and government AI initiatives.

Data Sovereignty Compliance

Make in India servers deployed on-premises help satisfy data localization requirements for government AI initiatives, DPDPA compliance, and regulated industry mandates.

Enterprise RFP Differentiation

PLI 2.0 selection and MeitY recognition provide objective third-party validation for AI server procurement proposals and government tender participation.

Brand Trust & Credibility

A 14-year manufacturing track record reduces perceived risk for enterprise teams evaluating a domestic OEM for AI server infrastructure.

Supply Chain Reliability

A 28,000 sq ft local manufacturing facility supports predictable delivery timelines for AI server deployments and phased infrastructure buildout projects.

Ready to Deploy On-Premises AI Infrastructure?

Request a detailed bill of quantities (BoQ) with volume pricing for AI single-node server deployments, or join our channel partner program for premium AI infrastructure margins.