Part 1 of 3 · RDP AI Infrastructure Series

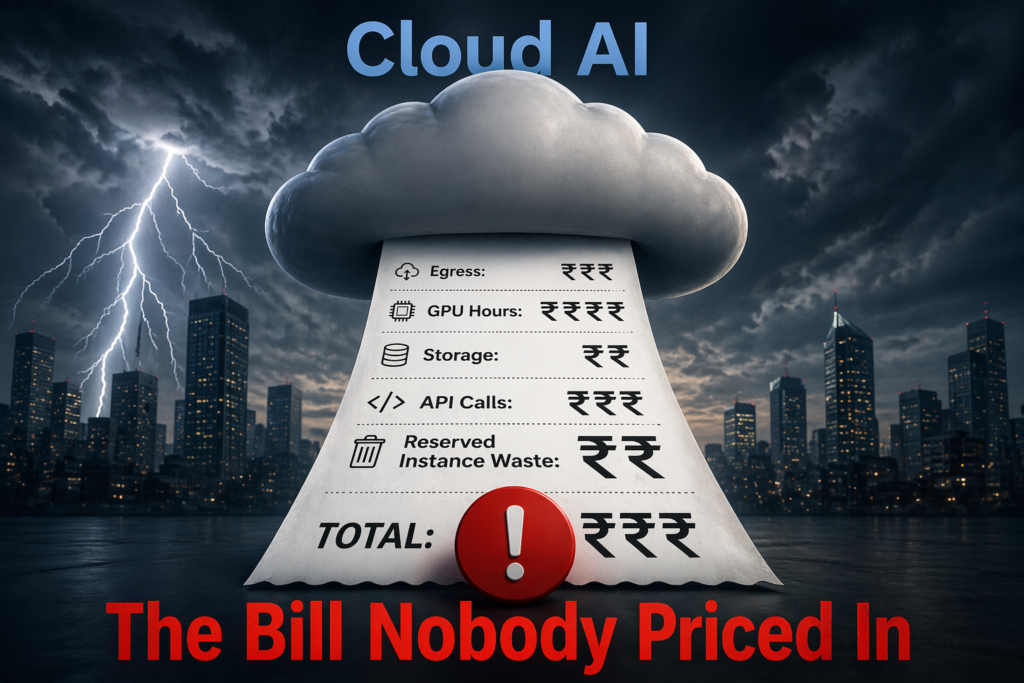

The CFO of a mid-sized Indian NBFC opened last month’s cloud bill and blinked twice. The AI team’s GPU spend had crossed ₹78 lakh for the quarter — up from ₹9 lakh a year ago. The workload hadn’t changed much. The models had.

This is the conversation happening in finance departments across India right now. It’s not anti-cloud. It’s a recalibration. The economics of cloud AI — sensible when models were small and workloads were episodic — have broken under the weight of production inference.

Welcome to the cloud AI cost crisis. And the quieter response to it: repatriation.

The Bill That Nobody Priced In

When Indian enterprises first adopted generative AI in 2023-24, the math looked simple. “Why buy a ₹40-lakh GPU server when you can rent an H100 by the hour?” That logic assumed three things: utilization would be low, workloads would be experimental, and costs would scale predictably.

None of those assumptions survived contact with production.

Here’s what’s actually happening on the ground in 2026:

GPU hourly rates haven’t come down the way CPU prices did. An H100 on AWS, Azure, or GCP runs roughly $3.50–$5 per hour on-demand. An 8-GPU instance — the minimum for serious training or high-throughput inference — costs $28–$40/hour. Running it 24/7 for a month? Roughly ₹17–24 lakh. For a workload that, six months in, is running at 60–80% utilization.

Inference is where the math breaks. Training is episodic — you spin up, train, spin down. Inference runs continuously in production. A customer support bot, a document classifier, a fraud detection pipeline — these don’t take weekends off. The cloud meter doesn’t either.

The rupee isn’t helping. Compute is billed in USD. At ₹84–85 to the dollar, every hour of GPU is materially more expensive in rupee terms than it was in 2022. That’s pure FX pain, compounding monthly.

Egress, storage, and “reserved but unused” capacity. The list price you compared was never the real price. Data egress from cloud providers starts at ~₹8/GB at scale. Object storage for training datasets runs into lakhs per month. Reserved instance commitments locked in during 2024’s GPU shortage now sit partially idle but still bill every month. The number you saw last month has all three baked in.

Add it up. For an Indian enterprise running meaningful AI in production — not demos, production — the annual cloud GPU bill is typically ₹1.5–4 crore. And growing.

The TCO Math, Done Honestly

Let’s run the numbers. Take a realistic mid-enterprise AI workload: 4 × H100-class GPUs running production inference with occasional fine-tuning.

Cloud route (3 years, on-demand blended with ~30% reservation)

- GPU compute: ~₹4.2 crore

- Storage + egress + networking: ~₹60 lakh

- Support and monitoring tooling: ~₹25 lakh

- 3-year total: ~₹5 crore

On-prem route (3 years, RDP-class AI-POD)

- Hardware capex (4× H100 server, networking, storage, rack): ~₹1.6–1.9 crore

- Power and cooling (India tariff, 10 kW continuous): ~₹36 lakh

- Facilities and physical security: ~₹15 lakh

- Staff (0.3 FTE infra engineer attributable): ~₹18 lakh

- Software stack + monitoring: ~₹12 lakh

- 3-year total: ~₹2.4–2.7 crore

Delta: ₹2.3–2.6 crore over 3 years. That’s 48–52% lower TCO.

These numbers vary — we’re speaking in ranges, not point estimates. But the shape of the answer doesn’t change based on the rounding:

- Break-even typically lands between 12 and 18 months of continuous workload.

- On-prem wins decisively above ~40% sustained GPU utilization.

- The gap widens in years 4–5, when cloud costs keep accumulating and on-prem hardware is written down.

The counter-argument — “but we need bursting capacity” — is real, but manageable. Most Indian enterprises we talk to need ~85–90% of their AI compute as steady-state, with only 10–15% as burst. A hybrid model (on-prem for steady-state, cloud for burst) captures most of the savings without sacrificing flexibility.

Why 2026 Is the Inflection Point

Three things have changed that make on-prem viable now in ways it wasn’t eighteen months ago.

1. Hardware availability is no longer the bottleneck. Through 2024, GPUs were hoarded by hyperscalers. Lead times of 9–12 months made on-prem impractical. That has reversed. H100, H200, and early B200 supply has normalized. Indian enterprises can now actually get what they order in 8–16 weeks.

2. Data residency isn’t a suggestion anymore. The Digital Personal Data Protection Act is in force. Sectoral mandates — RBI for financial services, IRDAI for insurance, MeitY for government-adjacent enterprises — are pushing sensitive workloads out of cross-border cloud regions. For an Indian bank running retrieval-augmented generation over customer data, on-prem isn’t a cost conversation anymore. It’s a compliance one.

3. The IndiaAI Mission is changing procurement incentives. With ₹10,000+ crore committed to indigenous AI compute and Make-in-India hardware getting procurement preference under BIS and PLI frameworks, the cost of not building sovereign AI infrastructure is rising. For public sector units and government-adjacent enterprises, domestic on-prem isn’t just cheaper — it unlocks procurement pathways that international cloud can’t.

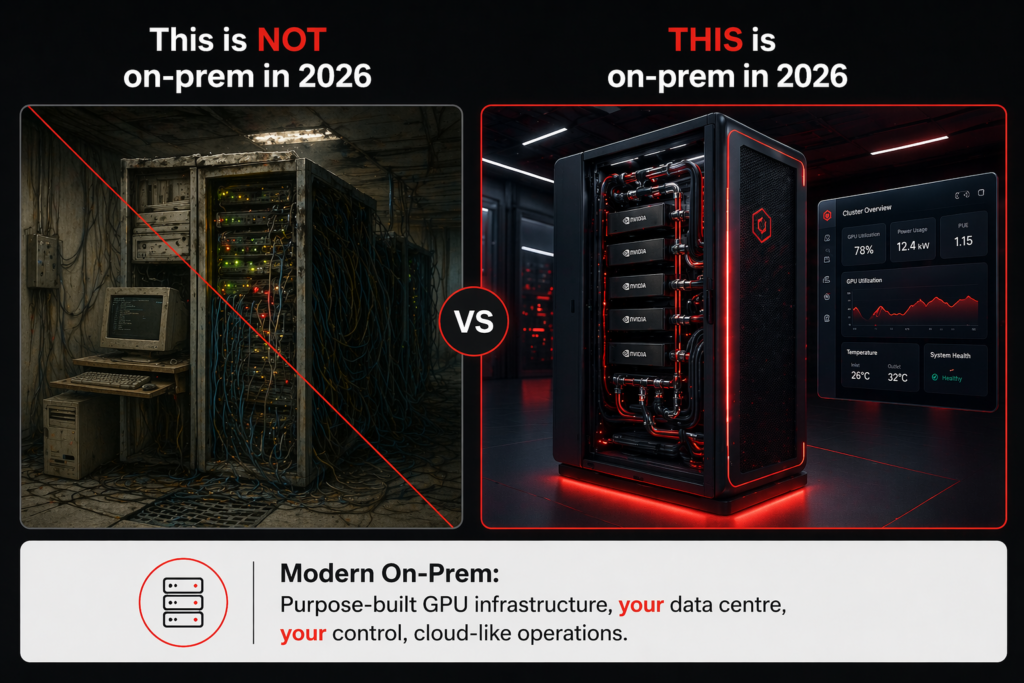

What “On-Prem” Actually Means in 2026

“Run it in a server room” is not what we’re talking about. The on-prem AI infrastructure being deployed across Indian enterprises today looks like this:

Edge AI (1–2 GPU) — inference at a branch, factory, or remote site. Ideal for computer vision on a production line, document processing at a branch, real-time analytics at edge. Small footprint, minimal cooling. Starts around ₹8–15 lakh.

Departmental AI-POD (2–8 GPU) — rack-mounted cluster for a team, department, or mid-size workload. Handles fine-tuning of domain-specific models, production inference at moderate scale, RAG over corporate knowledge bases. This is where most Indian mid-enterprises are landing. Typical spend ₹60 lakh – ₹2 crore.

AI Factory (16–128+ GPU) — rack-scale, liquid-cooled, purpose-built. This is the shift happening at the top of the market — banks, telcos, large PSUs, research institutions. It’s a capital investment, but with a 3–5 year runway and sovereignty built in.

RDP builds across all three tiers. The AI-POD architecture is our answer to departmental and mid-size deployments. The AI Factory product is what we deploy at rack scale. Both are designed, manufactured, and supported in India — which matters for warranty, lead time, and long-term parts availability in ways that become obvious around year three of any deployment.

Questions Every CIO Should Be Asking in Q2 2026

Before the next cloud bill lands:

- What is my actual GPU utilization on cloud? If it’s consistently above 40%, you’re funding your cloud provider’s margins, not your own.

- What percent of my workload is inference versus training? Inference-heavy workloads benefit most from on-prem.

- How much sensitive data is leaving my perimeter to run through a model? Your compliance team may already have an opinion.

- What’s my three-year AI compute forecast? If it’s flat or declining, cloud makes sense. If it’s growing, every month of delay compounds the bill.

- Am I paying for reserved capacity I’m not using? Many Indian enterprises locked into 2024 reservations during the shortage. Those reservations are quietly becoming a drag.

The Indian Angle: Sovereignty as a Cost Lever

For Indian enterprises specifically, the on-prem conversation isn’t only about TCO. It’s about:

- Data sovereignty — your customer data, your IP, your training corpus stay in your building, under your jurisdiction.

- FX insulation — rupee-denominated capex insulates you from dollar compute pricing.

- Procurement advantages — Make-in-India hardware carries preference in government, PSU, and BFSI tenders.

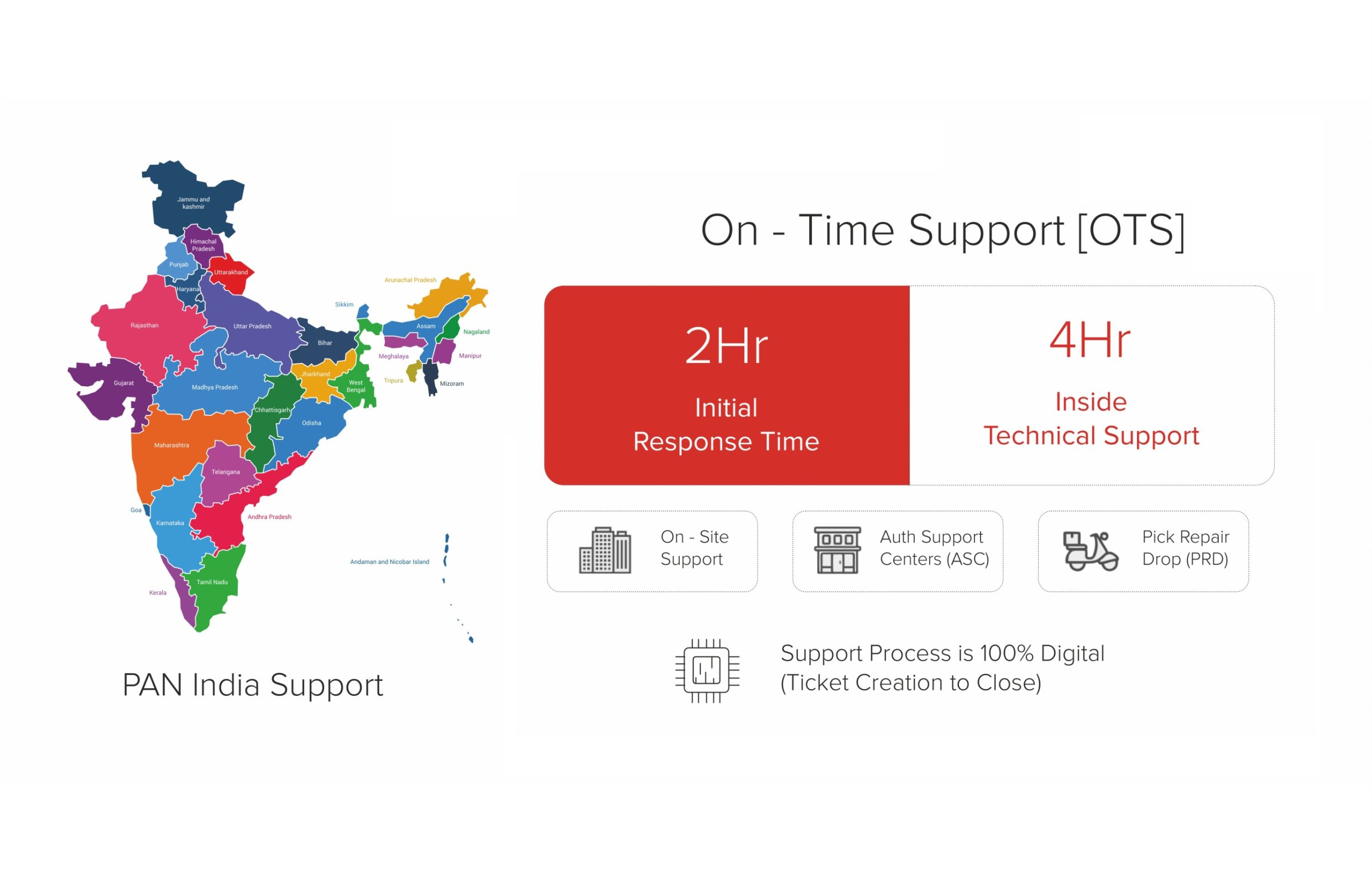

- Support latency — India-based warranty, spares, and engineering teams respond in hours, not days. Hyperscaler support tickets sit in global queues.

These aren’t marketing bullets. At RDP, we design, manufacture, and support AI infrastructure in India — which is the quiet reason our deployments tend to have lower year-3 friction than imported alternatives. Reliability is Our Product.

What’s Next

This was Part 1 of our AI Infrastructure series — the why behind the shift.

In Part 2, we’ll get tactical: how to actually design an AI Factory for your organization — what questions to ask, what architecture to consider, and how to phase the deployment so you don’t overbuild or underbuild.

In Part 3, we’ll tackle the policy and sovereignty angle — how Indian enterprises and government bodies are thinking about sovereign AI as a strategic imperative, not just a cost line.

If you’re in the middle of this decision — or you’re about to open your next cloud bill and want a second opinion — speak with our AI Infrastructure team. We do honest cost assessments, not sales pitches.

Read the full series

- Part 1: The Real Cost of Cloud AI (you are here)

- Part 2: Building Your AI Factory in India — a CIO’s playbook for 2026.

- Part 3: Sovereign AI Starts with Sovereign Compute — the policy and sovereignty angle.

Table: Cloud AI vs On-Premises AI — 3-Year TCO Comparison for a 100-GPU Cluster

| Cost / Factor | Public Cloud (AWS/Azure/GCP — India region) | On-Premises (Owned) | On-Premises (Co-lo / Managed) |

|---|---|---|---|

| Compute Cost (3 yr) | ₹18–28 cr (A100 on-demand/reserved blended) | ₹12–16 cr (CapEx, hardware + power) | ₹14–19 cr (hardware + co-lo fee) |

| Egress / Data Transfer | ₹0.08–0.12 per GB; large models = ₹1–3 cr/yr | ₹0 — data stays on-premises | ₹0 internal; nominal ISP cost |

| Inference Latency | 15–80 ms (internet hop + shared infra) | 1–5 ms (local network) | 3–10 ms (co-lo uplink) |

| Data Residency | Logical guarantee only; physically outside your perimeter | Full physical and legal control | Physical control; legal SLA with co-lo provider |

| Model Customisation | Limited — vendor API constraints apply | Full: fine-tune, RAG, quantise freely | Full — hardware is yours |

| Scaling Flexibility | Elastic up/down; costs spike with demand | Fixed capacity; over-provisioning risk | Moderate — can add nodes via co-lo agreement |

| Regulatory Compliance (DPDP, SEBI, IRDAI) | Requires additional audit; cloud provider attestation needed | Simplest to demonstrate compliance | Achievable with right SLA and audit rights |

RDP Technologies Limited designs, manufactures, and supports AI infrastructure — from edge compute to rack-scale AI factories — for Indian enterprises, government bodies, and research institutions. Make in India. Built for an AI-Ready India. Reliability is Our Product.