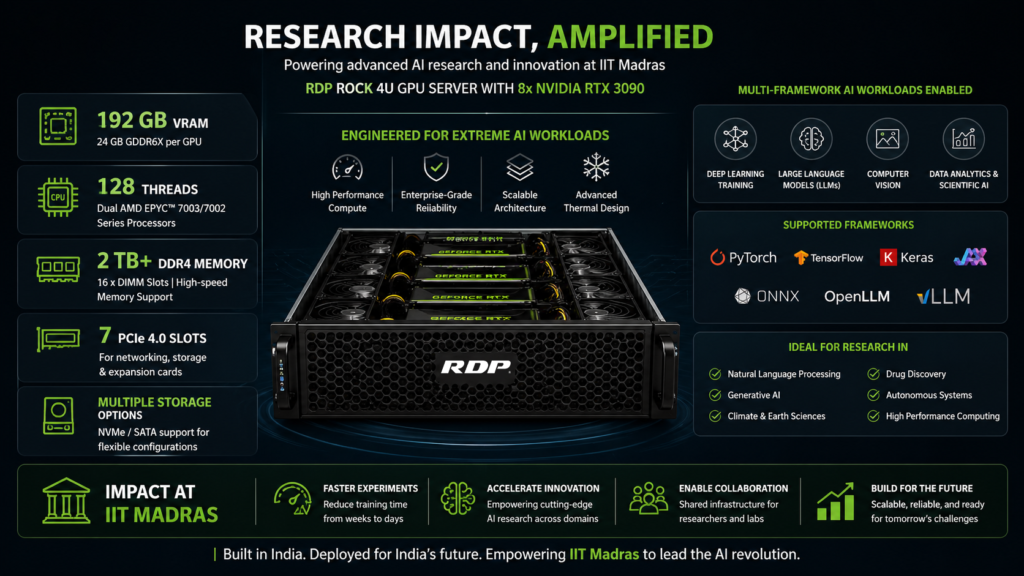

Eight NVIDIA RTX 3090 GPUs. 192 GB of VRAM. Dual AMD EPYC processors with 128 threads. Half a terabyte of ECC RAM. This is not a spec sheet from a Silicon Valley hyperscaler. This is a single server — designed, assembled, and delivered by RDP from Hyderabad to IIT Madras.

When one of India’s most demanding research institutions needed GPU-dense compute infrastructure for AI, deep learning, and scientific simulation workloads, it chose an Indian OEM. Here is what that decision looked like in practice.

The Requirement: Research-Grade GPU Compute at Scale

Indian Institute of Technology Madras is consistently ranked among India’s top research universities. Its departments run workloads that push hardware to the limit: large language model training, computational fluid dynamics, medical imaging analysis, robotics vision systems, and multi-physics simulations that can run for days.

The institution needed a single high-density compute platform capable of serving multiple research groups simultaneously. The requirements were specific:

- Maximum GPU density. AI and deep learning workloads are GPU-bound. The platform needed enough parallel compute to train large neural networks without queuing researchers behind each other.

- Enterprise-grade memory. Scientific simulations and large dataset operations require error-correcting memory — a single bit-flip during a week-long simulation run can invalidate the entire result.

- High-throughput storage. Training data, model checkpoints, and simulation outputs generate terabytes. The storage tier needed to keep pace with GPU throughput, not bottleneck it.

- Government procurement compliance. As a Ministry of Education institution, procurement had to align with government norms and Make in India preferences.

The Solution: RDP ROCK Series 4U GPU Server

RDP responded with a purpose-engineered 4U rackmount GPU server from its ROCK series — designed and assembled at the 28,000 sq. ft. ISO 9001-certified facility in Hyderabad. Every unit undergoes cloud-synced quality control benchmarks and PassMark validation before dispatch.

| Component | Specification |

|---|---|

| Processor | 2x AMD EPYC 7543 — 64 cores / 128 threads total, up to 3.7 GHz |

| Cache | 256 MB L3 per CPU (512 MB total) |

| Memory | 512 GB DDR4 ECC RAM |

| GPU | 8x NVIDIA RTX 3090 24 GB Turbo — 192 GB total VRAM |

| Boot storage | 2x 250 GB SATA SSD |

| Data storage | 8x 1.92 TB Micron 5300 Max Enterprise SSD (15.36 TB raw) |

| RAID | 3108-8i Enterprise RAID controller |

| Chassis | 4U rackmount HPC GPU server |

| Power supply | 3000W redundant PSU |

| Management | Dedicated management port (IPMI/BMC) |

| Warranty | 3 years onsite |

The configuration is purposeful at every layer. The dual EPYC 7543 processors provide 128 threads of CPU compute — enough to keep eight GPUs fed with data without becoming a preprocessing bottleneck. In mixed AI/HPC workloads, CPU starvation is the silent performance killer. 128 threads eliminates it.

512 GB of ECC RAM serves two functions. First, it provides the working memory for large dataset preprocessing, model parameter staging, and simulation state — operations that happen on CPU before GPU acceleration kicks in. Second, ECC ensures data integrity across multi-day training runs. When a neural network trains for 72 hours, a single uncorrected memory error can corrupt gradients and waste the entire run. ECC catches and corrects those errors silently.

The storage architecture pairs fast boot drives with 15.36 TB of enterprise-grade Micron 5300 Max SSDs — a drive rated for 5 full drive-writes per day, designed for write-intensive workloads. AI training checkpoints, intermediate outputs, and large dataset staging demand sustained write throughput that consumer SSDs cannot sustain.

Research Applications Enabled

A server with 192 GB of GPU memory and 128 CPU threads is not a single-purpose machine. At IIT Madras, this platform supports research groups across multiple departments simultaneously:

| Research domain | Workload | Why it needs this hardware |

|---|---|---|

| AI and deep learning | LLM training, neural network development, NLP | 8 GPUs enable data-parallel training across large models without splitting across nodes |

| HPC and scientific computing | Parallel simulations, numerical methods | 128 CPU threads + 512 GB RAM handle large-scale parallel computation |

| Computational fluid dynamics | Aerospace and thermal engineering simulation | GPU-accelerated solvers reduce simulation time from days to hours |

| Medical imaging | MRI, CT scan analysis, genomics | GPU inference on large imaging datasets at research-grade throughput |

| Computer vision | Object detection, satellite imagery, surveillance | Training vision models on high-resolution image datasets requires sustained GPU memory |

| Robotics | Vision and sensor fusion, autonomous systems | Real-time inference testing and simulation of autonomous navigation models |

The multi-user capability is critical. A single 8-GPU server, properly managed, can serve 4-6 concurrent research projects using GPU scheduling and containerised environments. For a university, that means fewer procurement cycles, less rack space, and a higher utilisation rate per rupee spent on compute.

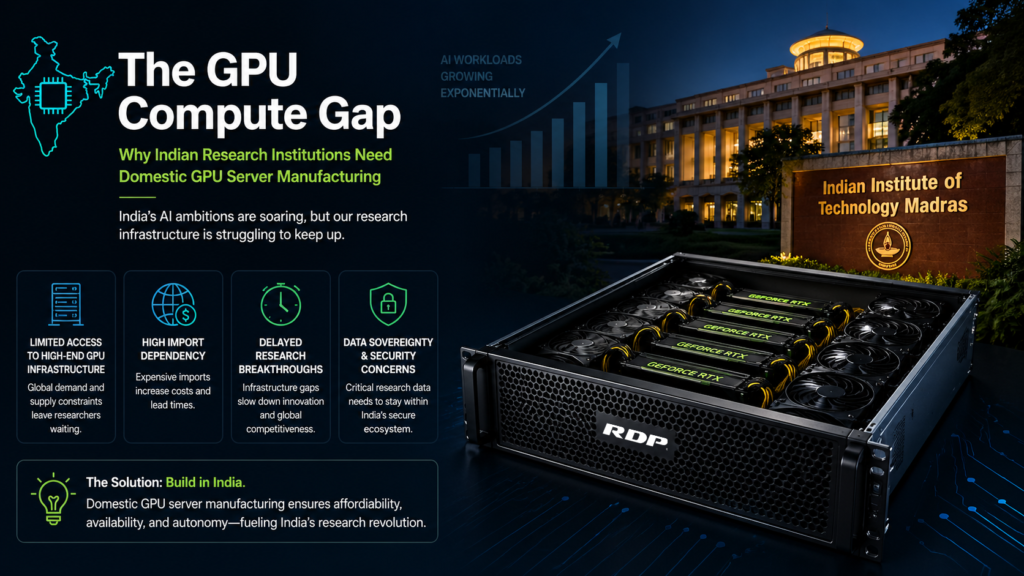

Why an Indian OEM for Research GPU Infrastructure

GPU server procurement in India has traditionally defaulted to global OEMs or system integrators importing pre-configured boxes. IIT Madras chose differently — and the reasons are structural.

Custom configuration. Research GPU servers are not commodity products. The specific combination of EPYC processors, RTX 3090 Turbo-variant GPUs (blower-style cooling optimised for multi-GPU density), enterprise RAID, and 3000W redundant power required engineering at the system level — not just component assembly. RDP’s engineering team configured the thermal, power, and PCIe lane allocation for this specific 8-GPU topology.

Government procurement alignment. As a Make in India OEM with 6,000+ SKUs on GeM, RDP’s procurement pathway is frictionless for government-funded institutions. No import delays, no customs complications, no intermediary channel markups.

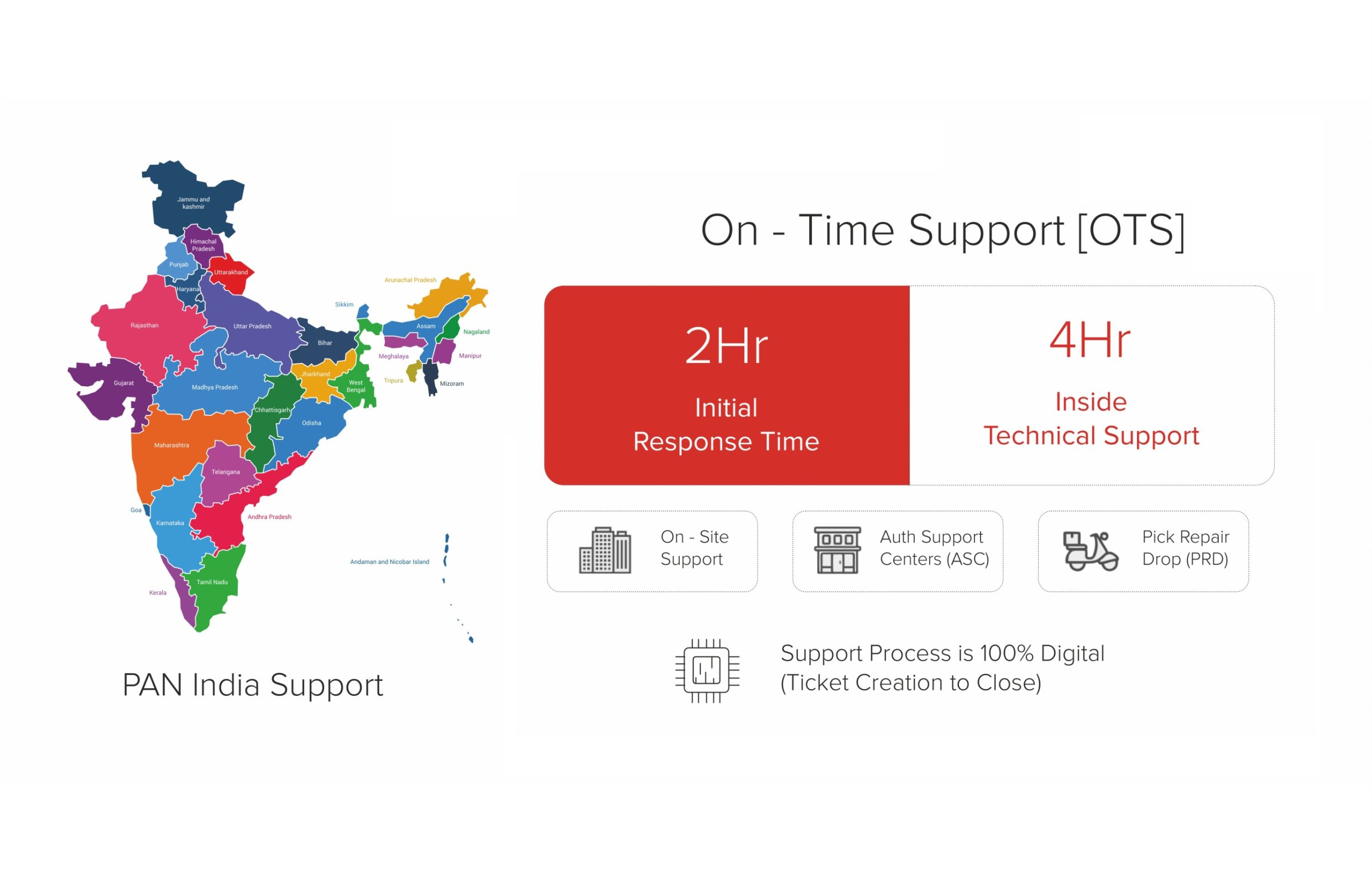

Onsite support. When a 3000W GPU server in a university data centre develops a power supply issue or a drive fails in the RAID array, the support response needs to be local and fast. RDP’s 3-year onsite warranty means an engineer — not a shipping label — responds to the problem.

The Outcome

| Outcome | Impact |

|---|---|

| GPU compute capacity | 192 GB VRAM across 8 GPUs — sufficient for large-scale model training |

| Multi-user research | 4-6 concurrent research projects supported via GPU scheduling |

| HPC capability | 128 CPU threads + 512 GB ECC RAM for simulation and preprocessing |

| Storage throughput | 15.36 TB enterprise SSD tier for sustained AI training workloads |

| Procurement | GeM-compliant, Make in India, direct OEM delivery |

This deployment positions IIT Madras with compute infrastructure that matches the ambition of its research programme. For an institution pushing the boundaries of AI, HPC, and computational science, the hardware cannot be the constraint. With RDP’s ROCK series GPU server, it is not.

From Lab to AI Factory

This project sits within a broader pattern. RDP also delivered a GPU workstation to CSIR-IICT for AI and machine learning research — and its AI factory infrastructure serves enterprises scaling from single-server deployments to full rack-scale GPU clusters. The IIT Madras deployment proves the same ROCK platform that serves enterprise AI serves academic AI with equal reliability.

For any Indian university, research lab, or Ministry of Education institution evaluating GPU compute infrastructure — the question is no longer whether an Indian OEM can deliver research-grade AI hardware. RDP has. Make in India. Built for an AI-Ready India. Reliability is Our Product.

Evaluating GPU compute infrastructure for your research institution? Talk to us.