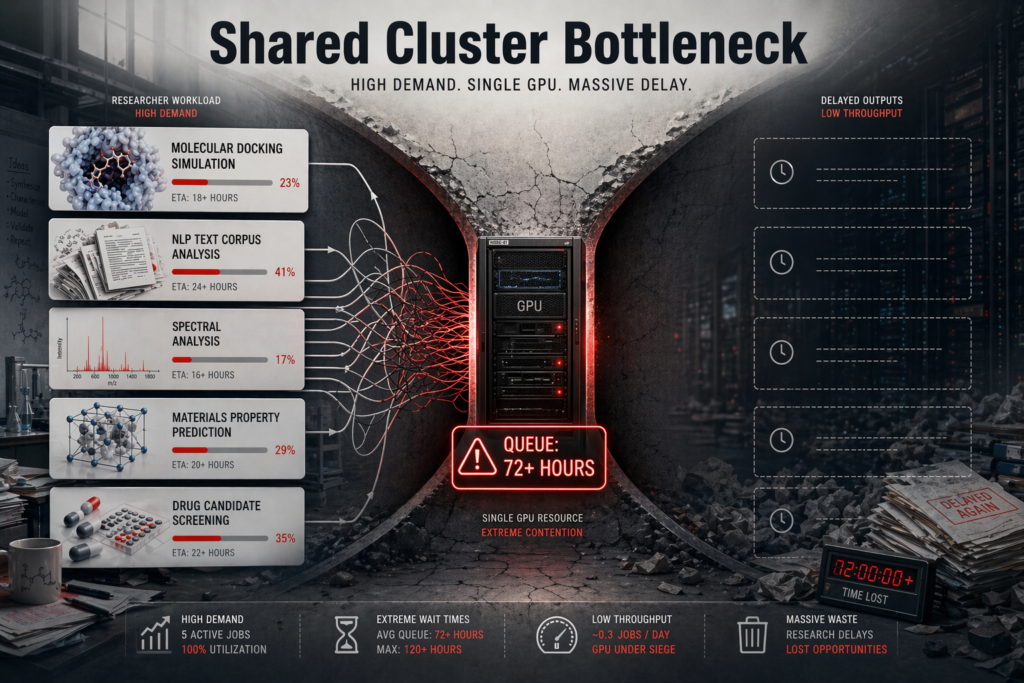

For most AI research groups in India, the slowest part of the workflow is not training. It is waiting to train.

A shared GPU cluster schedules jobs in a queue. A workstation runs them now. When the workload is one researcher’s model, run many times a week, the queue time often exceeds the compute time. The answer is usually the one procurement dismisses first: a production-grade, single-user GPU workstation that lives under the researcher’s desk.

That is the pattern we saw at a research group inside CSIR-IICT — the Indian Institute of Chemical Technology, Hyderabad — where AI/ML engineers and research scientists had hit the limits of a shared compute environment and needed a per-user platform for both deep learning and computational chemistry.

The customer

CSIR-IICT is one of India’s premier chemical-sciences research institutes, part of the Council of Scientific and Industrial Research network. The group we deployed for sits inside Advanced Research & Computational Sciences — a mixed team of AI/ML engineers and research scientists working across molecular modelling, drug discovery, catalysis, and AI-accelerated chemistry.

Work of that kind mixes long-running simulations with rapid iteration cycles. It is notoriously hard to serve well from a single shared cluster, and the group had the scars to prove it.

The problem

The team described four structural bottlenecks.

Deep learning models were being trained on datasets exceeding one million records, and the existing systems did not have the GPU memory or compute throughput to run them at a sensible cadence. Training that should have taken hours was stretching into days on underpowered hardware. That did not just add wall-clock time — it changed the kind of experiments researchers were willing to try, which is a quieter but more expensive problem.

Computational chemistry workloads, particularly COSMO-based simulations, needed sustained RAM capacity and stable thermal behaviour that the existing desktops could not deliver. And research-grade workloads run for days, not minutes. The platform needed to hold stable utilisation for long durations without throttling or hard failures.

In short: the group needed a workstation that behaved like a server — dense, reliable, engineered for compute — without taking on the operational burden of a server.

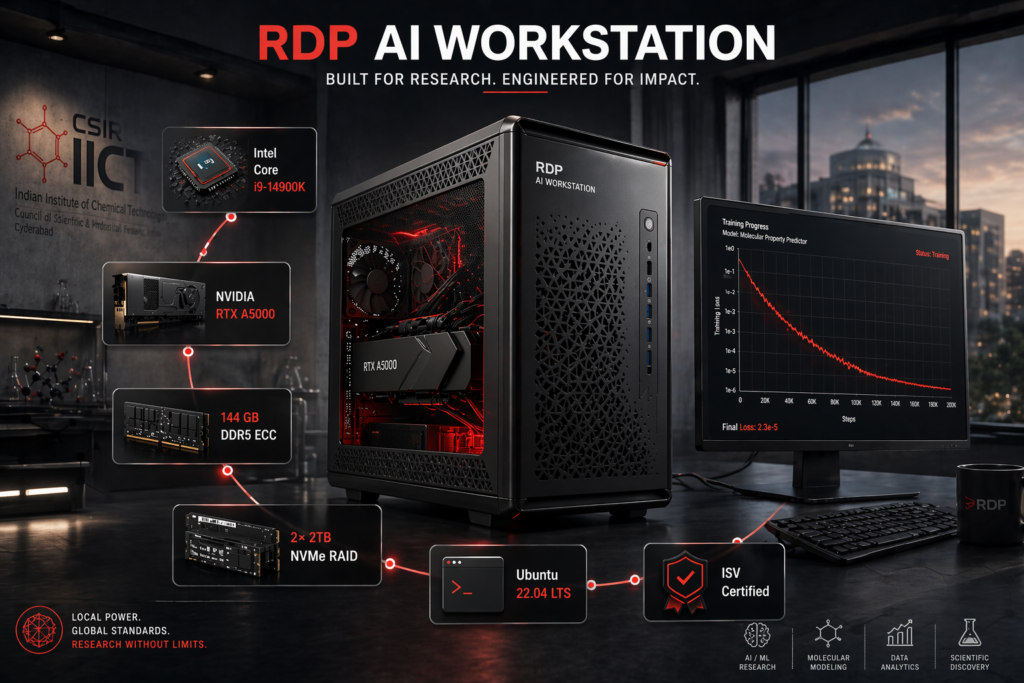

The deployment

RDP proposed and delivered a single, validated AI workstation configured to the workload rather than to a catalogue SKU. Intel Core i9-14900K, NVIDIA RTX A5000 24 GB professional GPU, 144 GB of DDR5 memory at 5600 MHz, 2 TB NVMe for active training data and checkpoints, 2 TB of enterprise HDD for the long-tail scientific corpus, a 1000 W high-efficiency PSU, and a server-grade tower chassis engineered for thermal headroom.

This is a deliberately asymmetric build. CPU, GPU, and memory are each sized for the heaviest sub-workload the researcher will run, rather than for an averaged profile. Storage is tiered. Power and thermals are specified for sustained peak load, not burst load.

Each subsystem was specified against a specific technical constraint. The high-core-count CPU accelerates data preprocessing, multi-threaded pipelines, and CPU-bound chemistry simulations. The 24 GB of professional GPU VRAM enables large-model training, higher batch sizes, and long-running GPU compute without memory thrashing. 144 GB of DDR5 handles large in-memory datasets and high-footprint simulation models. NVMe primary storage removes the disk-IO ceiling on training data loading. The server-grade chassis and 1000 W PSU hold stable power and airflow under simultaneous peak CPU and GPU utilisation.

The configuration was designed so that no single subsystem becomes the bottleneck under a realistic research workload. That is the difference between a gaming PC with a professional GPU and a research workstation.

The outcome

In production, the platform sustained roughly 75% GPU utilisation during training — the practical ceiling for mixed deep-learning and chemistry workloads on a single-user rig. System stability held under prolonged compute load. No reported bottlenecks. User satisfaction, in the researchers’ own words: “Performance is Awesome.”

Beyond the numbers, the practical outcomes the team reported were the ones that matter for research velocity. A significant reduction in model training time, enabling more iterations per week. Faster experimentation cycles — the decision to try a new model variant no longer required a queue calculation. Improved throughput on computational chemistry simulations. Server-grade compute delivered in a workstation form factor, reducing dependency on shared infrastructure. And a lower total cost of ownership than an equivalent single-user GPU server deployment.

What this unlocks

The pattern is transferable. Any Indian research group running AI/ML on medium-to-large datasets, per-researcher compute inside an academic institution, enterprise data-science teams with sustained training workloads, or computational chemistry and bioinformatics groups running high-memory simulations will see the same leverage.

The decision logic is simple. If one researcher’s workload runs often enough that queue time on a shared cluster starts to dominate total turnaround, a dedicated workstation of this class is usually both faster and cheaper. Shared clusters still win where jobs span multiple GPUs or where the utilisation of a dedicated machine would be low.

The mistake we see most often is defaulting to a shared server because that is what the previous procurement cycle did. Workload shape should drive the architecture, not the other way round — the same point we made in our AI Factory playbook for 2026. The IICT deployment is the same principle at a single-seat scale: specify the platform against the work, not against the org chart.