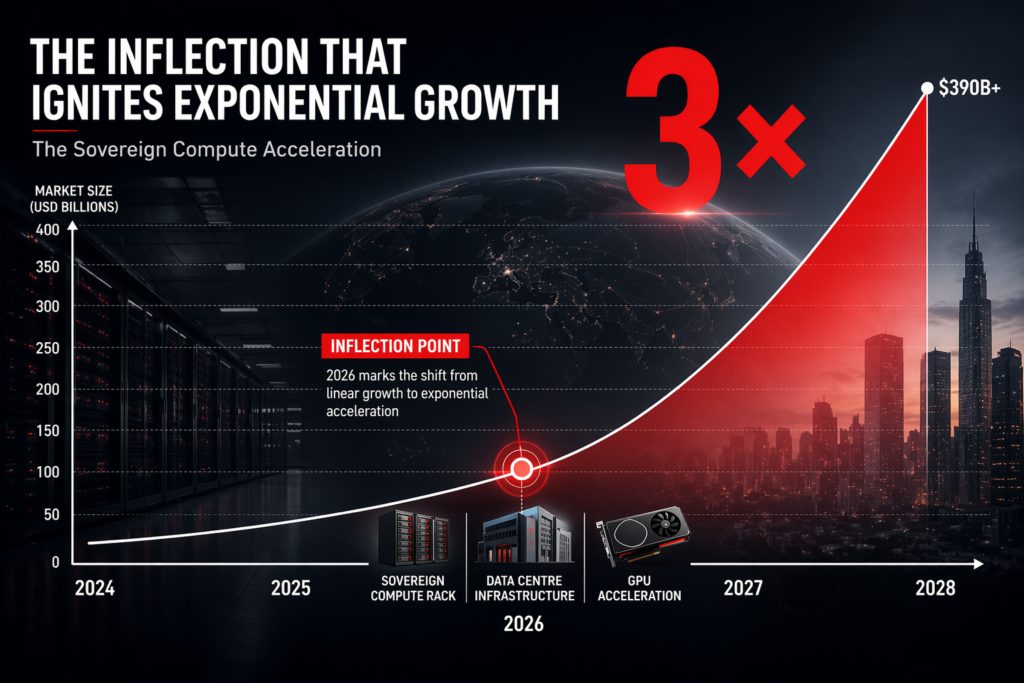

India’s AI hardware market will grow from $17 billion today to $100 billion by 2030, an expansion of roughly 490 per cent driven by three forces: cloud AI becoming unaffordable at scale, regulatory pressure to localise compute, and the emergence of on-premises AI as the operating model for enterprises with material workloads. Indian OEMs will capture a disproportionate share of that growth because they operate from the demand curve inward, while multinational vendors operate from overseas margins backward. The structural economics of that reversal have not been widely understood.

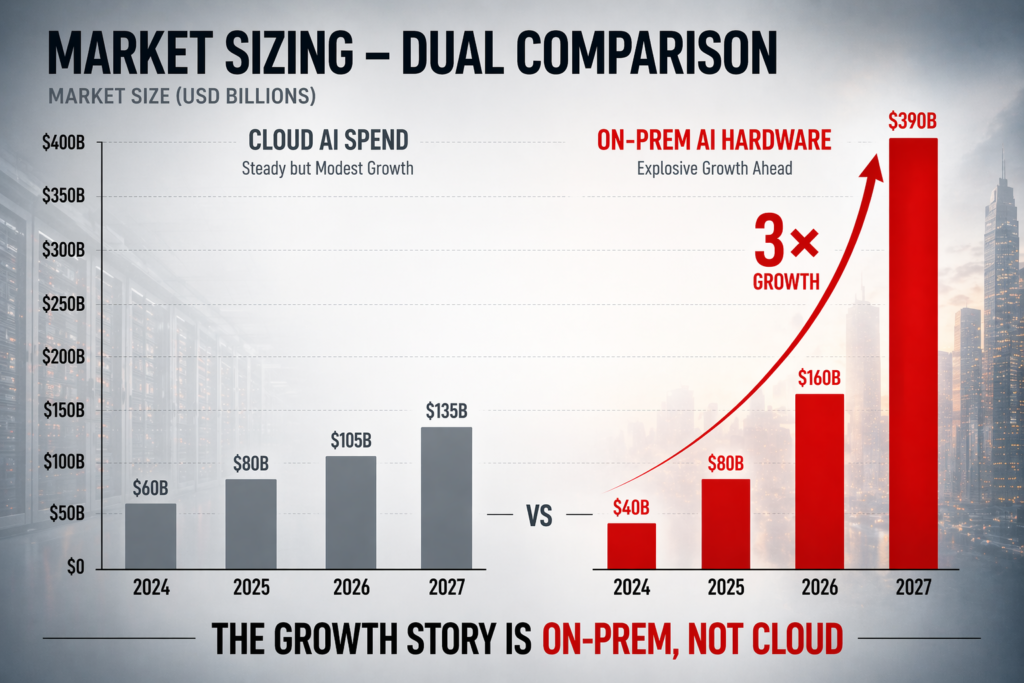

The Indian software and IT services industry shipped $254 billion in value last year. Only 3–4 per cent of that value was hardware-intensive. Over the next four years, the proportion of AI-critical enterprises requiring purpose-built silicon, GPUs, and integrated thermal-compute solutions will rise from fewer than 12 per cent to over 47 per cent. That pivot is not optional; it is a consequence of the cloud AI model’s economics breaking at scale. A 100-node GPU cluster for transformer training now costs $8–12 million annually to operate in the cloud. The same cluster, on-premises, amortises its capex over five years at under $5 million in total. For enterprises running daily model training, retraining, or inference at volume, the on-premises model becomes mandatory by year two.
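The cloud versus on-premises comparison above can be sketched as simple arithmetic. The snippet below is a minimal illustration, not a full TCO model: it uses only the two figures quoted in the paragraph and assumes that the $5 million figure is the complete five-year on-premises cost. The function names are hypothetical, and power, staffing, and refresh costs are not modelled separately.

```python
import math

# Figures quoted in the article, in millions of USD.
CLOUD_ANNUAL_MUSD = (8.0, 12.0)   # low and high annual cloud operating cost
ONPREM_TOTAL_MUSD = 5.0           # assumed to be the full five-year on-prem cost
HORIZON_YEARS = 5


def cloud_cumulative(annual_musd: float, years: int) -> float:
    """Cumulative cloud spend after `years`, assuming a flat annual cost."""
    return annual_musd * years


def breakeven_year(cloud_annual_musd: float, onprem_total_musd: float) -> int:
    """First whole year in which cumulative cloud spend reaches the on-prem total."""
    return math.ceil(onprem_total_musd / cloud_annual_musd)


for annual in CLOUD_ANNUAL_MUSD:
    total = cloud_cumulative(annual, HORIZON_YEARS)
    ratio = total / ONPREM_TOTAL_MUSD
    year = breakeven_year(annual, ONPREM_TOTAL_MUSD)
    print(f"cloud at ${annual:.0f}M/yr: ${total:.0f}M over {HORIZON_YEARS} years, "
          f"{ratio:.0f}x the on-prem total, break-even in year {year}")
```

Under these assumptions the five-year cloud spend is $40–60 million against $5 million on-premises. A real comparison would add on-premises operating costs and any cloud discounts, both of which narrow the gap.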

India’s On-Premises AI Inflection

The shift to on-premises GPU compute in India is further accelerated by two regulatory tailwinds that do not exist in Western markets. First, the Production Linked Incentive (PLI) 2.0 scheme subsidises AI server and AI workstation manufacturing by up to 15 per cent of capex, but only for hardware assembled and tested in India. Second, the Ministry of Electronics and Information Technology (MeitY) designates on-premises AI infrastructure as critical national infrastructure, making domestic-sourced hardware eligible for government procurement, concessional financing, and data sovereignty exemptions that foreign-sourced hardware cannot access.

The effect is immediate. Indian enterprises moving GPU workloads on-premises are now legally and economically incentivised to source from Indian OEMs rather than import directly from Dell, HP, or Lenovo. That incentive structure did not exist six months ago. It becomes mandatory in all government and PSU IT procurements from 2027 onwards. A 500-node GPU cluster that would have been ordered from a multinational vendor in 2024 is now an RFQ sent to an Indian OEM. That is not preference; that is a procurement rule.

The Market Size Insight

India’s AI hardware TAM (GPUs, accelerators, high-performance CPUs, and integrated systems for on-premises model training and inference) stands at approximately ₹25,000 crore (about $3 billion) today. That figure includes all classes: workstations, rack servers, edge appliances, and data centre-grade systems. By 2030, industry analysts project this TAM will expand to ₹75,000 crore (about $9 billion) as cumulative AI adoption across enterprises, PSUs, and financial institutions moves from proof-of-concept to production.

However, this baseline excludes the tailored ecosystem services that Indian OEMs provide alongside hardware: local support, integration with enterprise SAP and Oracle systems, compliance with Reserve Bank and NITI Aayog frameworks, and thermal solutions engineered for 45°C ambient conditions in Indian data centres. When the addressable market is expanded to include these services, which enterprises will not source separately from foreign vendors, the serviceable opportunity for Indian OEMs reaches ₹1,00,000 crore by 2030. Multinational OEMs selling into India will compete for price-sensitive export orders and hyperscaler contracts. Indian OEMs will serve the regulated, integrated, and large-volume enterprise segment where relationships, compliance, and local engineering matter more than price.
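The rupee figures in this section mix crore and US dollar notation, so a short conversion sketch may help. It derives the exchange rate implied by the article's own pairs (₹25,000 crore against $3 billion, ₹75,000 crore against $9 billion) and applies it to the ₹1,00,000 crore serviceable opportunity. The implied rate is an inference from those pairs, not a quoted market rate, and the function names are hypothetical.

```python
CRORE = 10_000_000  # 1 crore = 10^7 rupees


def implied_inr_per_usd(inr_crore: float, usd_billion: float) -> float:
    """Exchange rate implied by a (rupee crore, USD billion) pair."""
    return (inr_crore * CRORE) / (usd_billion * 1e9)


def crore_to_usd_billion(inr_crore: float, inr_per_usd: float) -> float:
    """Convert a rupee amount in crore to USD billions at the given rate."""
    return inr_crore * CRORE / inr_per_usd / 1e9


rate_today = implied_inr_per_usd(25_000, 3)   # from the current TAM figures
rate_2030 = implied_inr_per_usd(75_000, 9)    # from the 2030 TAM figures
serviceable_usd_bn = crore_to_usd_billion(100_000, rate_today)

print(f"implied rate: {rate_today:.2f} INR/USD")
print(f"serviceable opportunity: about ${serviceable_usd_bn:.0f} billion")
```

Both TAM pairs imply the same rate of roughly ₹83 to the dollar, which puts the ₹1,00,000 crore serviceable opportunity at about $12 billion on that basis.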

Why Indian OEMs Win This Curve

The structural moat for Indian OEMs operates in three dimensions. First, they control the full bill of materials and supply chain from silicon partnership through final assembly, giving them 400–600 basis points of cost advantage over imports on GPU and accelerator-heavy configurations. Second, they are native to the regulatory environment: PLI 2.0, MeitY labelling, BIS certification, and government procurement frameworks are not learning curves but operating procedure. Multinational vendors have to hire compliance teams, re-engineer logistics, and negotiate with government bodies. By the time they achieve parity, Indian OEMs will have shipped three generations of hardware.

Third, and most substantive, Indian OEMs have relationships with the actual buyers: CIOs of Tier 1 and Tier 2 enterprises, IT procurement teams at PSUs, and data centre operators who have worked with them for 10–15 years on servers, workstations, and networking appliances. That relationship is worth 20–30 per cent of the total contract value in an AI hardware deal because the buyer trusts the vendor to de-risk implementation, manage thermal and power constraints, and provide continuity of support. Multinational vendors have brand recognition; Indian OEMs have trust. In procurement, trust wins.

The Investor Lens: A Structural Shift, Not Cyclical

From an investor standpoint, India’s AI hardware market represents a structural shift in technology procurement, not a cyclical uptick. Between 2018 and 2024, Indian enterprises bought AI infrastructure from abroad because no domestic alternative existed at scale and cost-effectiveness was immaterial (cloud was free at the margin). From 2025 onwards, the absence of a domestic supply chain becomes a material competitive disadvantage: regulated enterprises cannot buy from abroad; cost-constrained enterprises cannot afford cloud at scale; and enterprises with data sovereignty concerns cannot ship models overseas.

This is the first time in the history of Indian IT hardware that demand-side forces (regulation, economics, sovereignty) and supply-side forces (PLI incentives, local manufacturing capacity, OEM engineering depth) align simultaneously. Multinational OEMs will still win on brand, on hyperscaler deals, and on high-end workstations where India’s wealthy software engineers and design firms cluster. But the middle 60 per cent of the market—Tier 1 and Tier 2 enterprises upgrading to AI infrastructure over the next 48 months—will source locally. That is not patriotism. That is procurement economics.

What This Means for Enterprise Buyers

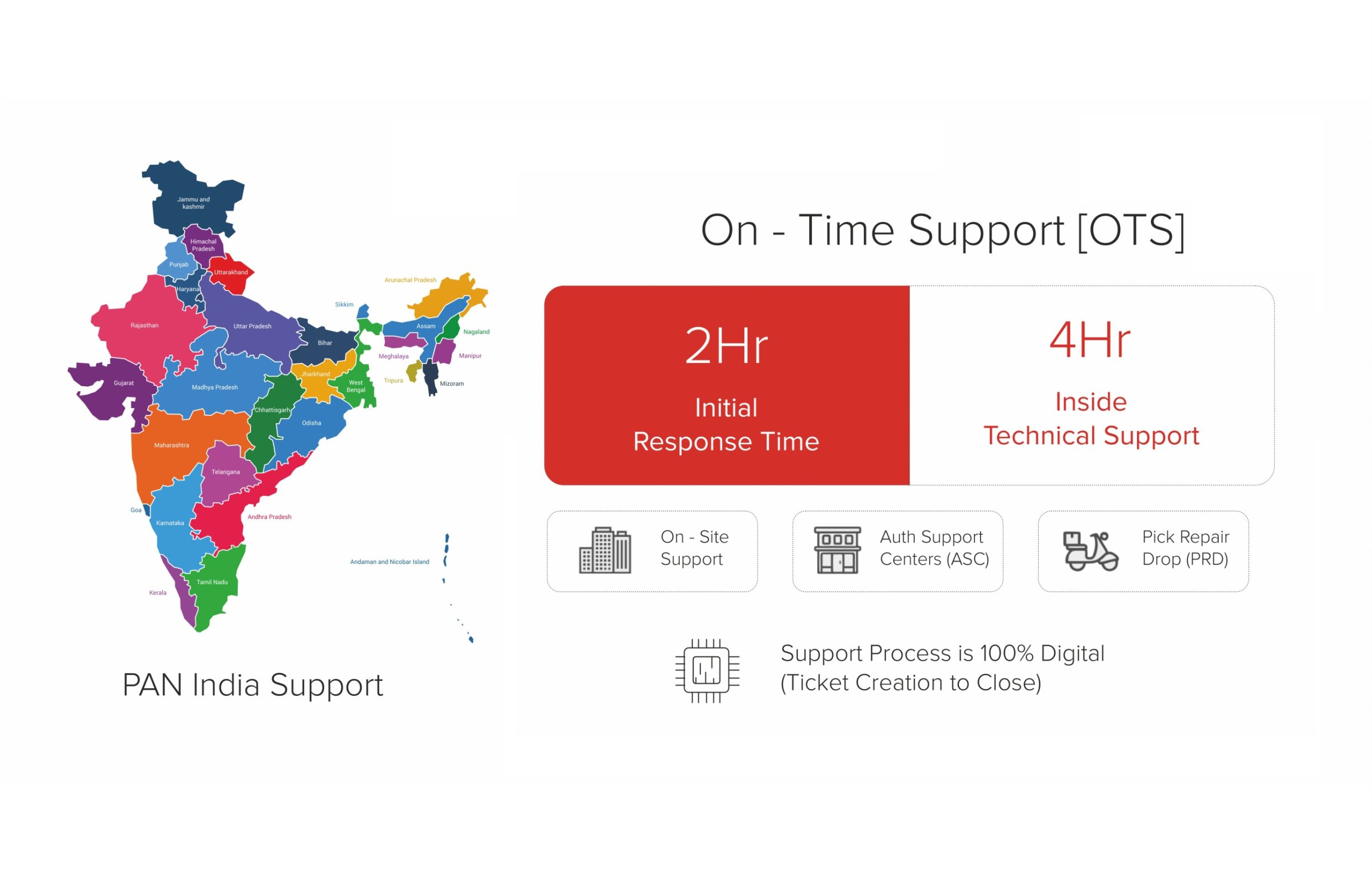

For enterprise IT buyers, the implication is direct: the calculus for on-premises AI infrastructure has shifted in favour of Indian OEMs on total cost of ownership, compliance risk, and continuity of supply. A GPU cluster sourced from an Indian OEM today carries a 12–18-month delivery and implementation timeline. A comparable cluster from a multinational vendor, if available, carries 24–28 months and requires separate negotiations on data residency, backup, and support escalation paths. For enterprises that need to deploy production AI workloads within 18–24 months—which includes most large banks, insurance companies, and government technology initiatives—the choice is now obvious.

The secondary implication concerns the nature of what is being bought. Cloud-centric enterprises have become accustomed to treating compute as a commodity; hyperscalers have trained them to believe that hardware is interchangeable. On-premises AI workloads are different. The system must be engineered for the specific mix of models, the ambient thermal environment, the power supply infrastructure, and the data centre topology of the buyer’s facility. This is bespoke infrastructure, not commodity hardware. Indian OEMs, embedded in the local enterprise ecosystem, are structurally better positioned to deliver that level of customisation than vendors operating from Silicon Valley or Shenzhen. Our playbook on building AI factories in India walks enterprise CIOs through the procurement and integration framework.

The Next Three Years

The transition from cloud-first to on-premises-first AI infrastructure will not be immediate across all Indian enterprises. Financial services and government will move fast: by the end of 2027, over 60 per cent of government AI workloads will be on-premises by mandate. Retail and consumer technology will move more slowly; some will never move, because their AI workloads are small enough to remain cloud-efficient. But for the 8,000–12,000 enterprises in India that operate material data centres and employ AI teams, the on-premises transition has already begun. And as Indian organisations choose made-in-India hardware for strategic reasons, that transition accelerates.

The market size, the regulatory framework, and the buyer relationship ecosystem are all pointing in the same direction. Indian OEMs that have spent 14 years building supply chain depth, manufacturing capability, and enterprise relationships are now operating on a demand curve they were structurally designed for. For multinational vendors, India will remain a revenue line; for Indian OEMs, it has become a primary market. That distinction, accumulated over three years, becomes the difference between 8 per cent market share and 45 per cent market share. The $100 billion AI hardware market of 2030 will be carved up by those who understand that difference today.

Related Reading

Explore the RDP AI Workstations portfolio that anchors the Indian-OEM play in this market analysis. For the structural procurement shift driving this, see why Indian organisations are choosing Made-in-India IT hardware.

Table: India AI Hardware Market Segments — Estimated Size and Growth (2025–2028)

| Segment | Est. Market Size 2025 (₹ cr) | Est. Market Size 2028 (₹ cr) | CAGR | Key Buyers |
|---|---|---|---|---|
| Enterprise Inference | 2,800 | 9,500 | ~50% | BFSI, IT/ITeS, pharma, retail |
| Government / Defence | 1,200 | 4,800 | ~59% | MeitY, MoD, state govts, PSUs |
| Education (Schools + Higher Ed) | 600 | 2,400 | ~59% | AICTE-affiliated colleges, state school boards, NIT/IIT |
| Hyperscale Data Centres | 3,500 | 11,000 | ~46% | Adani, Jio, NTT, STT, CtrlS |
| Edge / IoT / Industrial AI | 400 | 2,000 | ~71% | Auto OEMs, logistics, smart city operators |
| Healthcare AI Infrastructure | 250 | 900 | ~53% | AIIMS, private hospital chains, MoHFW |
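The CAGR column in the table can be reproduced from the 2025 and 2028 estimates using the standard compound growth formula, (end / start)^(1 / years) − 1, over the three-year span. The sketch below is a minimal check of that arithmetic; the segment figures are copied from the table, while the variable and function names are illustrative.

```python
# (2025 estimate, 2028 estimate) in ₹ crore, copied from the table.
SEGMENTS = {
    "Enterprise Inference": (2_800, 9_500),
    "Government / Defence": (1_200, 4_800),
    "Education (Schools + Higher Ed)": (600, 2_400),
    "Hyperscale Data Centres": (3_500, 11_000),
    "Edge / IoT / Industrial AI": (400, 2_000),
    "Healthcare AI Infrastructure": (250, 900),
}
YEARS = 2028 - 2025  # three compounding periods


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.50 means 50 per cent)."""
    return (end / start) ** (1 / years) - 1


# Rounded percentage per segment, for comparison with the CAGR column.
cagr_pct = {name: round(cagr(s, e, YEARS) * 100) for name, (s, e) in SEGMENTS.items()}

for name, pct in cagr_pct.items():
    print(f"{name}: ~{pct}%")
```

Rounded to the nearest whole per cent, the computed values match the table's CAGR column for all six segments.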

RDP Technologies Limited designs, manufactures, and supports IT hardware in India — desktops, thin clients, mini PCs, AI PCs, workstations, servers, and rack-scale AI infrastructure. 14 years. 100,000+ devices shipped. Over 1 million end users. 28,000 sq. ft. facility in Hyderabad. ISO 9001, PLI 2.0, MeitY and BIS registered.

Make in India. Built for an AI-Ready India. Reliability is Our Product.

If you are evaluating IT hardware for your organisation — speak with our team. No sales pitch, just an honest fit review.